How to Set Up an Apache Kafka (ZooKeeper) Cluster

Environment

$ cat /etc/redhat-release

CentOS Linux release 7.9.2009 (Core)

$ getconf LONG_BIT

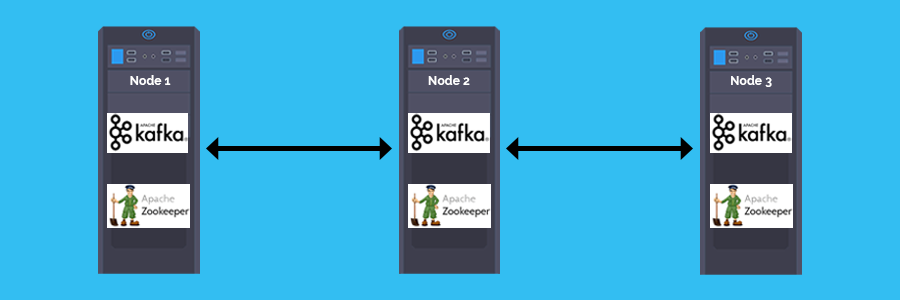

64카프카 클러스터 다이어그램


Install Java

$ yum install -y java-11-openjdk.x86_64

$ java --version

openjdk 11.0.13 2021-10-19 LTS

OpenJDK Runtime Environment 18.9 (build 11.0.13+8-LTS)

OpenJDK 64-Bit Server VM 18.9 (build 11.0.13+8-LTS, mixed mode, sharing)

ZooKeeper cluster setup (ZooKeeper Cluster on Multi Node)

Download ZooKeeper

https://www.apache.org/dyn/closer.lua/zookeeper/zookeeper-3.7.0/apache-zookeeper-3.7.0-bin.tar.gz

$ cd /usr/local/src/

$ wget https://dlcdn.apache.org/zookeeper/zookeeper-3.7.0/apache-zookeeper-3.7.0-bin.tar.gz

$ tar xfz apache-zookeeper-3.7.0-bin.tar.gz

$ mv apache-zookeeper-3.7.0-bin /usr/local/zookeeper

Configure ZooKeeper (zoo.cfg)

$ cp /usr/local/zookeeper/conf/zoo_sample.cfg /usr/local/zookeeper/conf/zoo.cfg

$ vim /usr/local/zookeeper/conf/zoo.cfg

$ cd /usr/local/zookeeper

zoo_sample.cfg default configuration file

$ vim conf/zoo_sample.cfg

# The number of milliseconds of each tick

tickTime=2000

# The number of ticks that the initial

# synchronization phase can take

initLimit=10

# The number of ticks that can pass between

# sending a request and getting an acknowledgement

syncLimit=5

# the directory where the snapshot is stored.

# do not use /tmp for storage, /tmp here is just

# example sakes.

dataDir=/tmp/zookeeper

# the port at which the clients will connect

clientPort=2181

# the maximum number of client connections.

# increase this if you need to handle more clients

#maxClientCnxns=60

#

# Be sure to read the maintenance section of the

# administrator guide before turning on autopurge.

#

# http://zookeeper.apache.org/doc/current/zookeeperAdmin.html#sc_maintenance

#

# The number of snapshots to retain in dataDir

#autopurge.snapRetainCount=3

# Purge task interval in hours

# Set to "0" to disable auto purge feature

#autopurge.purgeInterval=1

## Metrics Providers

#

# https://prometheus.io Metrics Exporter

#metricsProvider.className=org.apache.zookeeper.metrics.prometheus.PrometheusMetricsProvider

#metricsProvider.httpPort=7000

#metricsProvider.exportJvmInfo=true

Edit the zoo.cfg file

#server.1

$ vim conf/zoo.cfg

tickTime=2000

initLimit=10

syncLimit=5

dataDir=/var/lib/zookeeper

dataLogDir=/var/log/zookeeper

clientPort=2181

4lw.commands.whitelist=*

server.1=datanode01:2888:3888

server.2=datanode02:2888:3888

server.3=datanode03:2888:3888
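initLimit and syncLimit are counted in ticks, so the effective timeouts are tickTime multiplied by each limit. As a rough worked example with the values above (ticks_to_ms is a helper name made up for this sketch, not part of ZooKeeper):

```shell
# Hypothetical helper (not part of ZooKeeper): convert a limit expressed
# in ticks into milliseconds, given tickTime in milliseconds.
ticks_to_ms() {
    tick_time_ms=$1
    ticks=$2
    echo $((tick_time_ms * ticks))
}

# Values taken from the zoo.cfg above: tickTime=2000, initLimit=10, syncLimit=5.
echo "initLimit timeout: $(ticks_to_ms 2000 10) ms"   # 20000 ms = 20 s
echo "syncLimit timeout: $(ticks_to_ms 2000 5) ms"    # 10000 ms = 10 s
```

With these settings the initial synchronization phase may take up to 20 seconds, and up to 10 seconds may pass between sending a request and getting an acknowledgement.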

#server.2

$ vim conf/zoo.cfg

tickTime=2000

initLimit=10

syncLimit=5

dataDir=/var/lib/zookeeper

dataLogDir=/var/log/zookeeper

clientPort=2181

4lw.commands.whitelist=*

server.1=datanode01:2888:3888

server.2=datanode02:2888:3888

server.3=datanode03:2888:3888
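The server.N entries are the same on every node, and N has to match the number that each node stores in the myid file under dataDir. A minimal sketch that derives both from one host list, assuming the host names and the 2888/3888 ports used in this guide (zk_server_lines and zk_myid_for are hypothetical helper names):

```shell
# Hypothetical helpers, not part of ZooKeeper.

# Print one server.N line per host, numbering from 1 in argument order.
zk_server_lines() {
    n=1
    for host in "$@"; do
        echo "server.${n}=${host}:2888:3888"
        n=$((n + 1))
    done
}

# Print the id (N) that a given host should write to its myid file.
# Usage: zk_myid_for <this-host> <host1> <host2> ...
zk_myid_for() {
    target=$1
    shift
    n=1
    for host in "$@"; do
        if [ "$host" = "$target" ]; then
            echo "$n"
            return 0
        fi
        n=$((n + 1))
    done
    return 1   # the host is not in the list
}

zk_server_lines datanode01 datanode02 datanode03
zk_myid_for datanode02 datanode01 datanode02 datanode03   # prints 2
```

Keeping the host list in one place makes it harder for the server.N numbering and the myid values to drift apart.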

#server.3

$ vim conf/zoo.cfg

tickTime=2000

initLimit=10

syncLimit=5

dataDir=/var/lib/zookeeper

dataLogDir=/var/log/zookeeper

clientPort=2181

4lw.commands.whitelist=*

server.1=datanode01:2888:3888

server.2=datanode02:2888:3888

server.3=datanode03:2888:3888

Create the ZooKeeper data and log directories

$ mkdir -p /var/lib/zookeeper /var/log/zookeeper

Create the myid file

#server.1(192.168.0.101)

$ echo 1 > /var/lib/zookeeper/myid

#server.2(192.168.0.102)

$ echo 2 > /var/lib/zookeeper/myid

#server.3(192.168.0.103)

$ echo 3 > /var/lib/zookeeper/myid

Start ZooKeeper

$ ./bin/zkServer.sh start

Stop ZooKeeper

$ ./bin/zkServer.sh stop

https://zookeeper.apache.org/doc/r3.6.3/zookeeperTools.html
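zkServer.sh has to be run on each node. If passwordless SSH to the nodes is available (an assumption; this guide does not set it up), a loop along these lines could run the same action on all of them. ZK_NODES, REMOTE_RUNNER, and zk_all are names invented for this sketch.

```shell
# Hypothetical wrapper; assumes passwordless SSH and the install path
# /usr/local/zookeeper used in this guide.
ZK_NODES="${ZK_NODES:-datanode01 datanode02 datanode03}"
REMOTE_RUNNER="${REMOTE_RUNNER:-ssh}"   # set to "echo" for a dry run

# Run one zkServer.sh action (start, stop, status, ...) on every node.
zk_all() {
    action=$1
    for node in $ZK_NODES; do
        "$REMOTE_RUNNER" "$node" "/usr/local/zookeeper/bin/zkServer.sh $action"
    done
}

# Usage:
#   zk_all start
#   zk_all status
```

Setting REMOTE_RUNNER=echo prints the commands instead of running them, which is a cheap way to check the node list first.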

Check the ZooKeeper mode

- Mode: leader or follower

#server.1

$ ./bin/zkServer.sh status

/bin/java

ZooKeeper JMX enabled by default

Using config: /usr/local/zookeeper/bin/../conf/zoo.cfg

Client port found: 2181. Client address: localhost. Client SSL: false.

Mode: follower

#server.2

$ ./bin/zkServer.sh status

/bin/java

ZooKeeper JMX enabled by default

Using config: /usr/local/zookeeper/bin/../conf/zoo.cfg

Client port found: 2181. Client address: localhost. Client SSL: false.

Mode: follower

#server.3

$ ./bin/zkServer.sh status

/bin/java

ZooKeeper JMX enabled by default

Using config: /usr/local/zookeeper/bin/../conf/zoo.cfg

Client port found: 2181. Client address: localhost. Client SSL: false.

Mode: leader

ZooKeeper four-letter-word (4lw) admin commands

ZooKeeper version

$ echo srvr | nc localhost 2181

Zookeeper version: 3.7.0-e3704b390a6697bfdf4b0bef79e3da7a4f6bac4b, built on 2021-03-17 09:46 UTC

Latency min/avg/max: 0/0.3549/3

Received: 3176

Sent: 3192

Connections: 1

Outstanding: 0

Zxid: 0x4000000ba

Mode: follower

Node count: 30

$ echo mntr | nc localhost 2181 | more

zk_version 3.7.0-e3704b390a6697bfdf4b0bef79e3da7a4f6bac4b, built on 2021-03-17 09:46 UTC

zk_server_state follower

zk_peer_state following - broadcast

4lw (Apache ZooKeeper-specific): https://zookeeper.apache.org/doc/current/zookeeperAdmin.html#sc_zkCommands
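Because the four-letter commands return plain text, their output is easy to script. A small sketch, assuming the srvr and mntr output formats shown above (zk_mode and zk_metric are hypothetical helper names that read the command output from standard input):

```shell
# Hypothetical helpers; they only parse text in the formats shown above.

# Extract the value of the "Mode:" line from `srvr` output on stdin.
zk_mode() {
    awk -F': ' '$1 == "Mode" { print $2 }'
}

# Extract one metric from `mntr` output on stdin (key, whitespace, value).
zk_metric() {
    awk -v key="$1" '$1 == key { sub(/^[^ \t]+[ \t]+/, ""); print }'
}

# Usage against a running server:
#   echo srvr | nc localhost 2181 | zk_mode
#   echo mntr | nc localhost 2181 | zk_metric zk_server_state
```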

Kafka cluster setup (Kafka (broker) Cluster on Multi Node)

Download Kafka

https://kafka.apache.org/downloads

$ wget https://dlcdn.apache.org/kafka/3.0.0/kafka_2.13-3.0.0.tgz

$ tar xfz kafka_2.13-3.0.0.tgz

$ cd kafka_2.13-3.0.0/

$ ls

bin config libs LICENSE licenses NOTICE site-docs

$ cd ..

$ mv kafka_2.13-3.0.0 /usr/local/kafka

Kafka configuration

server.properties default settings

# Licensed to the Apache Software Foundation (ASF) under one or more

# contributor license agreements. See the NOTICE file distributed with

# this work for additional information regarding copyright ownership.

# The ASF licenses this file to You under the Apache License, Version 2.0

# (the "License"); you may not use this file except in compliance with

# the License. You may obtain a copy of the License at

#

# http://www.apache.org/licenses/LICENSE-2.0

#

# Unless required by applicable law or agreed to in writing, software

# distributed under the License is distributed on an "AS IS" BASIS,

# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

# See the License for the specific language governing permissions and

# limitations under the License.

# see kafka.server.KafkaConfig for additional details and defaults

############################# Server Basics #############################

# The id of the broker. This must be set to a unique integer for each broker.

broker.id=0

############################# Socket Server Settings #############################

# The address the socket server listens on. It will get the value returned from

# java.net.InetAddress.getCanonicalHostName() if not configured.

# FORMAT:

# listeners = listener_name://host_name:port

# EXAMPLE:

# listeners = PLAINTEXT://your.host.name:9092

#listeners=PLAINTEXT://:9092

# Hostname and port the broker will advertise to producers and consumers. If not set,

# it uses the value for "listeners" if configured. Otherwise, it will use the value

# returned from java.net.InetAddress.getCanonicalHostName().

#advertised.listeners=PLAINTEXT://your.host.name:9092

# Maps listener names to security protocols, the default is for them to be the same. See the config documentation for more details

#listener.security.protocol.map=PLAINTEXT:PLAINTEXT,SSL:SSL,SASL_PLAINTEXT:SASL_PLAINTEXT,SASL_SSL:SASL_SSL

# The number of threads that the server uses for receiving requests from the network and sending responses to the network

num.network.threads=3

# The number of threads that the server uses for processing requests, which may include disk I/O

num.io.threads=8

# The send buffer (SO_SNDBUF) used by the socket server

socket.send.buffer.bytes=102400

# The receive buffer (SO_RCVBUF) used by the socket server

socket.receive.buffer.bytes=102400

# The maximum size of a request that the socket server will accept (protection against OOM)

socket.request.max.bytes=104857600

############################# Log Basics #############################

# A comma separated list of directories under which to store log files

log.dirs=/tmp/kafka-logs

# The default number of log partitions per topic. More partitions allow greater

# parallelism for consumption, but this will also result in more files across

# the brokers.

num.partitions=1

# The number of threads per data directory to be used for log recovery at startup and flushing at shutdown.

# This value is recommended to be increased for installations with data dirs located in RAID array.

num.recovery.threads.per.data.dir=1

############################# Internal Topic Settings #############################

# The replication factor for the group metadata internal topics "__consumer_offsets" and "__transaction_state"

# For anything other than development testing, a value greater than 1 is recommended to ensure availability such as 3.

offsets.topic.replication.factor=1

transaction.state.log.replication.factor=1

transaction.state.log.min.isr=1

############################# Log Flush Policy #############################

# Messages are immediately written to the filesystem but by default we only fsync() to sync

# the OS cache lazily. The following configurations control the flush of data to disk.

# There are a few important trade-offs here:

# 1. Durability: Unflushed data may be lost if you are not using replication.

# 2. Latency: Very large flush intervals may lead to latency spikes when the flush does occur as there will be a lot of data to flush.

# 3. Throughput: The flush is generally the most expensive operation, and a small flush interval may lead to excessive seeks.

# The settings below allow one to configure the flush policy to flush data after a period of time or

# every N messages (or both). This can be done globally and overridden on a per-topic basis.

# The number of messages to accept before forcing a flush of data to disk

#log.flush.interval.messages=10000

# The maximum amount of time a message can sit in a log before we force a flush

#log.flush.interval.ms=1000

############################# Log Retention Policy #############################

# The following configurations control the disposal of log segments. The policy can

# be set to delete segments after a period of time, or after a given size has accumulated.

# A segment will be deleted whenever *either* of these criteria are met. Deletion always happens

# from the end of the log.

# The minimum age of a log file to be eligible for deletion due to age

log.retention.hours=168

# A size-based retention policy for logs. Segments are pruned from the log unless the remaining

# segments drop below log.retention.bytes. Functions independently of log.retention.hours.

#log.retention.bytes=1073741824

# The maximum size of a log segment file. When this size is reached a new log segment will be created.

log.segment.bytes=1073741824

# The interval at which log segments are checked to see if they can be deleted according

# to the retention policies

log.retention.check.interval.ms=300000

############################# Zookeeper #############################

# Zookeeper connection string (see zookeeper docs for details).

# This is a comma separated host:port pairs, each corresponding to a zk

# server. e.g. "127.0.0.1:3000,127.0.0.1:3001,127.0.0.1:3002".

# You can also append an optional chroot string to the urls to specify the

# root directory for all kafka znodes.

zookeeper.connect=localhost:2181

# Timeout in ms for connecting to zookeeper

zookeeper.connection.timeout.ms=18000

############################# Group Coordinator Settings #############################

# The following configuration specifies the time, in milliseconds, that the GroupCoordinator will delay the initial consumer rebalance.

# The rebalance will be further delayed by the value of group.initial.rebalance.delay.ms as new members join the group, up to a maximum of max.poll.interval.ms.

# The default value for this is 3 seconds.

# We override this to 0 here as it makes for a better out-of-the-box experience for development and testing.

# However, in production environments the default value of 3 seconds is more suitable as this will help to avoid unnecessary, and potentially expensive, rebalances during application startup.

group.initial.rebalance.delay.ms=0

Create the Kafka log directory

$ mkdir /var/lib/kafka

Edit server.properties

#server.1

$ vim config/server.properties

broker.id=1

listeners=PLAINTEXT://:9092

advertised.listeners=PLAINTEXT://192.168.0.101:9092

num.network.threads=3

num.io.threads=8

socket.send.buffer.bytes=102400

socket.receive.buffer.bytes=102400

socket.request.max.bytes=104857600

log.dirs=/var/lib/kafka

num.partitions=1

num.recovery.threads.per.data.dir=1

offsets.topic.replication.factor=1

transaction.state.log.replication.factor=1

transaction.state.log.min.isr=1

log.retention.hours=168

log.segment.bytes=1073741824

log.retention.check.interval.ms=300000

zookeeper.connect=192.168.0.101:2181,192.168.0.102:2181,192.168.0.103:2181

zookeeper.connection.timeout.ms=18000

group.initial.rebalance.delay.ms=0
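Most of this file is identical on every broker. Per node, only broker.id and advertised.listeners change, and advertised.listeners should carry the broker's own address because that is the address handed to producers and consumers. A sketch that prints the per-broker lines, assuming the IP addresses used in this guide (broker_overrides is a hypothetical helper name):

```shell
# Hypothetical helper: print the two settings that differ per broker.
# Usage: broker_overrides <broker-id> <broker-ip>
broker_overrides() {
    id=$1
    ip=$2
    echo "broker.id=${id}"
    echo "advertised.listeners=PLAINTEXT://${ip}:9092"
}

broker_overrides 1 192.168.0.101
broker_overrides 2 192.168.0.102
broker_overrides 3 192.168.0.103
```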

#server.2

$ vim config/server.properties

broker.id=2

listeners=PLAINTEXT://:9092

advertised.listeners=PLAINTEXT://192.168.0.102:9092

num.network.threads=3

num.io.threads=8

socket.send.buffer.bytes=102400

socket.receive.buffer.bytes=102400

socket.request.max.bytes=104857600

log.dirs=/var/lib/kafka

num.partitions=1

num.recovery.threads.per.data.dir=1

offsets.topic.replication.factor=1

transaction.state.log.replication.factor=1

transaction.state.log.min.isr=1

log.retention.hours=168

log.segment.bytes=1073741824

log.retention.check.interval.ms=300000

zookeeper.connect=192.168.0.101:2181,192.168.0.102:2181,192.168.0.103:2181

zookeeper.connection.timeout.ms=18000

group.initial.rebalance.delay.ms=0
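zookeeper.connect is a comma-separated list of host:port pairs, one per ZooKeeper server, and it is the same on every broker. A minimal sketch that assembles it from the node addresses, assuming client port 2181 on every node (zk_connect_string is a hypothetical helper name):

```shell
# Hypothetical helper: build the zookeeper.connect line from a host list.
zk_connect_string() {
    result=""
    for host in "$@"; do
        # Prefix a comma only when result already holds an entry.
        result="${result:+${result},}${host}:2181"
    done
    echo "zookeeper.connect=${result}"
}

zk_connect_string 192.168.0.101 192.168.0.102 192.168.0.103
# prints zookeeper.connect=192.168.0.101:2181,192.168.0.102:2181,192.168.0.103:2181
```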

#server.3

$ vim config/server.properties

broker.id=3

listeners=PLAINTEXT://:9092

advertised.listeners=PLAINTEXT://192.168.0.103:9092

num.network.threads=3

num.io.threads=8

socket.send.buffer.bytes=102400

socket.receive.buffer.bytes=102400

socket.request.max.bytes=104857600

log.dirs=/var/lib/kafka

num.partitions=1

num.recovery.threads.per.data.dir=1

offsets.topic.replication.factor=1

transaction.state.log.replication.factor=1

transaction.state.log.min.isr=1

log.retention.hours=168

log.segment.bytes=1073741824

log.retention.check.interval.ms=300000

zookeeper.connect=192.168.0.101:2181,192.168.0.102:2181,192.168.0.103:2181

zookeeper.connection.timeout.ms=18000

group.initial.rebalance.delay.ms=0

Kafka version

$ find /usr/local/kafka/ -name \*kafka_\* | head -1 | grep -o '\kafka[^\n]*' | awk -F'-' {'print $2'} | cut -d '.' -f 1-3

3.0.0

Start Kafka

$ bin/kafka-server-start.sh -daemon config/server.properties

Stop Kafka

$ bin/kafka-server-stop.sh

List the brokers in the cluster

$ bin/kafka-broker-api-versions.sh --bootstrap-server datanode01:9092 | grep id | awk '{print $(NF-6)}'

192.168.0.101:9092

192.168.0.103:9092

192.168.0.102:9092
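As the output above shows, the brokers are not necessarily listed in id order; what matters is that every broker appears. A small sketch that compares the list against the expected addresses, assuming the three addresses used in this guide (missing_brokers is a hypothetical helper name that reads the actual list from standard input):

```shell
# Hypothetical helper: print every expected broker address that does not
# appear (as a whole line) in the list read from stdin.
# Usage: <broker list command> | missing_brokers <addr1> <addr2> ...
missing_brokers() {
    actual=$(cat)
    for expected in "$@"; do
        printf '%s\n' "$actual" | grep -qxF -- "$expected" || echo "$expected"
    done
}

# Usage with the command above:
#   bin/kafka-broker-api-versions.sh --bootstrap-server datanode01:9092 \
#     | grep id | awk '{print $(NF-6)}' \
#     | missing_brokers 192.168.0.101:9092 192.168.0.102:9092 192.168.0.103:9092
```

No output means all expected brokers have registered; any address printed points at a broker that is down or misconfigured.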

